Excuse me, but your proclivity is showing

Human failure to process information became the success of Brexit, and maybe Trump.

If recent history has taught us anything, it’s that pollsters are fallible, just like the rest of us. They assured us for months that British voters would opt to stay in the European Union, right up to the day those voters chose to leave. Betting markets never once gave Donald Trump a dog’s show of winning the Republican Party’s presidential nomination. Even as his popularity surged, they stubbornly insisted the bubble was sure to burst. Why?

Because, in short, people prefer to believe what they prefer to believe. Most of us think we’ve figured out how the world works. That world view is generally a panorama, stitched together from a lifetime’s experience, learning, observation and belief. Those who aspire to leadership or influence understand this well. As Benjamin Franklin advised: “If you would persuade, appeal to interest and not to reason.”

He understood, as do marketers and politicians, that the secret to securing compliance is to massage our beliefs—reinforce our decision to invest in them, flatter us on our choices. Thus the major religions have been able to influence people’s thinking since the dawn of society itself. Thus Donald J. Trump defied the betting markets to emerge as the Republican candidate.

Our world view is our comfy spot, like a favourite couch. We feel safe there—oriented and secure. We like it just the way it is. When somebody comes along and suggests we shift it, or swap it for another one, we resist, because familiarity and stasis are comforts in a quicksilver world.

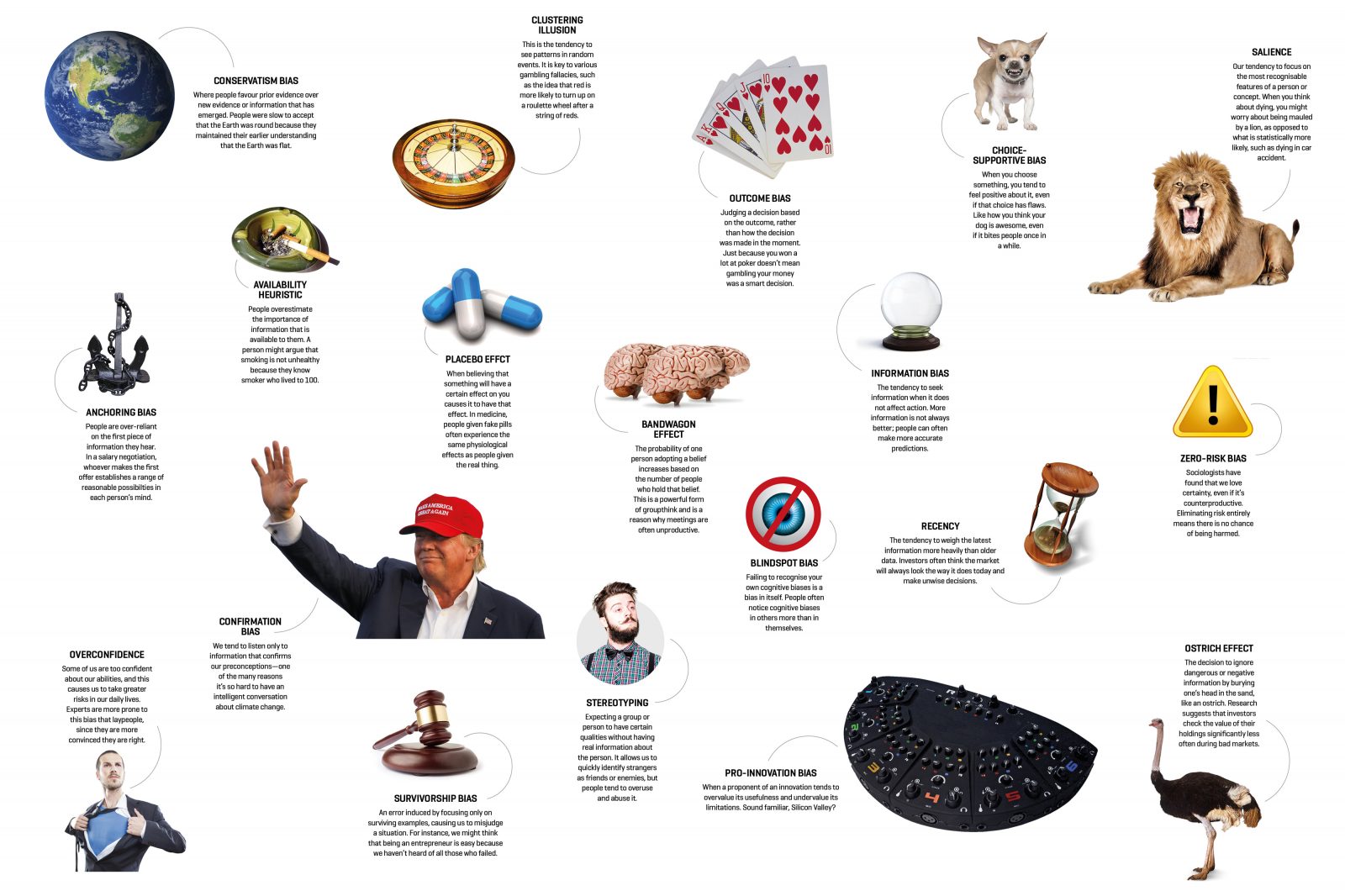

When new information challenges our beliefs, our first reaction is to doubt, resist or distort it. It’s called confirmation bias, and it wasn’t always a bad thing, say social anthropologists:

A palaeolithic ancestor who paused to cogitate the galloping threat of a strange new predator was unlikely to get the chance to pass that moment of hesitancy on to any offspring. The expeditious association between a sabre-tooth cat and a premature end is what psychologists call a heuristic—a kind of comprehension shorthand that allowed us to make swift, life-changing decisions based on a minimum of information.

Human existence today isn’t quite so contingent. We don’t have to make many snap life-or-death decisions because we have the benefit of education, and we’re briefed by volumes of up-to-the-minute information, reassured by empirical evidence. But that doesn’t mean we use it diligently. Researchers have compiled a whole dossier of other biases—at least three dozen discrete ways we might make up our minds without due regard for the facts.

An obvious one is stereotyping: a rather more inimical form of generalisation, in which some of us anticipate that certain people (or groups of people) will possess a trait or motivation. Not all stereotyping is overtly racist or sexist; it plays out in ways subtle and manifest. Blond hair, glasses, black clothing—all can excite lurking preconceptions we may have picked up through our social conditioning or experience. Complicating our assessments are the awkward singular exceptions that keep feeding people’s belief in a rule—for instance, I, an older Caucasian male, genuinely cannot dance (or shoot hoops).

Are you susceptible to groupthink? Conspiracy theories, always lurking beneath the mantle of popular culture, burst into peak paranoia soon after the arrival of the internet. Suddenly, conspiracists could effortlessly publish their suspicions to the world, within minutes. Some have assumed an unquestioned authenticity in the minds of millions: the 9/11 ‘truthers’ who believe the terror attacks were ordered by their own leaders, anti-vaccination campaigners convinced that immunising their children against measles, mumps and rubella puts them at risk of autism. The bandwagon effect describes this phenomenon, in which the more people subscribe to an idea, the more likely others are to adopt it too.

Another fascinating heuristic is the placebo effect, in which people believe that a medication or treatment they have received has made them feel better, even when it contained no active remedial element. Experiments have reported that patient response and satisfaction rates with a given drug tend to plummet once a newer, supposedly superior, successor is released. Others have shown that study subjects, when warned about side effects of a drug they believe they are about to be given, complain of exactly those maladies, even when they are given sugar pills instead. And more interesting still: trials record that people have become markedly more responsive to placebos over the past decade (but also to medication). That might say something about the evolution of our faith in medicine, or just about experimental design.

Between 1848 and 2011, 32 major earthquakes shook New Zealand. Two of them occurred when the moon was full, prompting a contention, accepted by many people, that future earthquakes can be predicted simply by mapping out the phases of the moon. But when the other 30 major earthquakes were plotted against the lunar calendar, it was clear that there was no relationship at all: the earthquakes were scattered across all lunar phases. This is an example of illusory correlation, or the clustering illusion, in which we think we see links between two things, but only because we haven’t seen the rest of the data.
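For the arithmetically inclined, here is a rough back-of-envelope check of how unremarkable two full-moon earthquakes out of 32 would be. It is only a sketch, and it leans on assumptions that are not in the account above: that "full moon" means one particular day of a lunar cycle of roughly 29.53 days, and that earthquakes fall independently and evenly across that cycle. The names `prob_at_least`, `expected_hits` and `chance_of_two_or_more` are made up for the illustration.

```python
from math import comb

SYNODIC_MONTH_DAYS = 29.53  # mean length of one lunar cycle, in days
N_QUAKES = 32               # major earthquakes cited in the text


def prob_at_least(k, n, p):
    """Binomial probability of k or more hits in n independent trials."""
    # Subtract the probability of 0, 1, ..., k-1 hits from 1.
    return 1.0 - sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k))


# Assumption: a quake counts as "full moon" if it lands on one given day of the cycle.
p_full_moon = 1 / SYNODIC_MONTH_DAYS

expected_hits = N_QUAKES * p_full_moon
chance_of_two_or_more = prob_at_least(2, N_QUAKES, p_full_moon)

print(f"Expected full-moon quakes by chance alone: {expected_hits:.2f}")
print(f"Chance of two or more by luck: {chance_of_two_or_more:.0%}")
```

Under those assumptions, about one of the 32 earthquakes would be expected to land on a full-moon day by luck alone, so two is hardly a signal. A wider definition of "full moon" would only raise the number expected by chance.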

Some gamblers are prone to clustering fallacies: they believe that something that happens repeatedly over a short timeframe, say a run of red on the roulette table, constitutes a sequence, so they try to base a strategy on that perceived pattern. (It must be time to bet on black, because that red cluster must be due to run out.) In fact, every spin of the wheel is independent of the last, and no such patterns exist.
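The claim that a run of red tells you nothing about the next spin can be checked with a quick simulation. What follows is a minimal sketch, not a model of any real casino: it assumes a fair European wheel (37 pockets, 18 of them red), and the helper names `simulate_spins` and `red_rate_after_streak` are invented for the purpose.

```python
import random


def simulate_spins(n, seed=0):
    """Return n simulated spins as booleans (True = red)."""
    rng = random.Random(seed)
    # Assumption: fair European wheel, pockets 0..36, 18 of the 37 counted as red.
    return [rng.randrange(37) < 18 for _ in range(n)]


def red_rate_after_streak(spins, streak_len):
    """Return (overall red rate, red rate on spins that follow streak_len reds in a row)."""
    follow_ups = 0      # spins that came straight after a qualifying red streak
    follow_up_reds = 0  # how many of those spins were red again
    run = 0             # length of the current run of consecutive reds
    for is_red in spins:
        if run >= streak_len:
            follow_ups += 1
            follow_up_reds += is_red
        run = run + 1 if is_red else 0
    overall = sum(spins) / len(spins)
    after = follow_up_reds / follow_ups if follow_ups else float("nan")
    return overall, after


spins = simulate_spins(500_000)
overall, after = red_rate_after_streak(spins, 4)
print(f"Red overall:               {overall:.3f}")
print(f"Red after four reds in a row: {after:.3f}")
```

On a fair wheel the two figures should sit close to each other, both near 18/37. Red is no less likely after a streak than at any other time, so black is never "due".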

By now, you may be congratulating yourself that you’ve been savvy to these foibles all along, too much of a critical thinker to succumb to their lure. Well, you probably just exhibited hindsight bias—or creeping determinism—claiming foresight after the event, even when you had no substantive basis for predicting it.

We may find it unsettling, but we all harbour biases of one kind or another, partly because information—and change—is coming at us so fast, from so many directions, that we have to resort to a few heuristics just to stay abreast of them. But it’s also down to the fact that we often rationalise, rather than reason, our way through everyday intellectual transactions. As social psychologist Jonathan Haidt put it in a 2001 research paper, ‘The Emotional Dog and its Rational Tail’: “One becomes a lawyer trying to build a case, rather than a judge searching for the truth.” We’ll wolf down evidence and contentions that reinforce our beliefs, then spit out the bits that challenge our views. Palatable news goes down in seconds, but we’ll spend hours gainsaying a contradiction.

But then, I might be committing blind-spot bias: failing to recognise my own cognitive biases.